
3/5/2023 Gradient descent

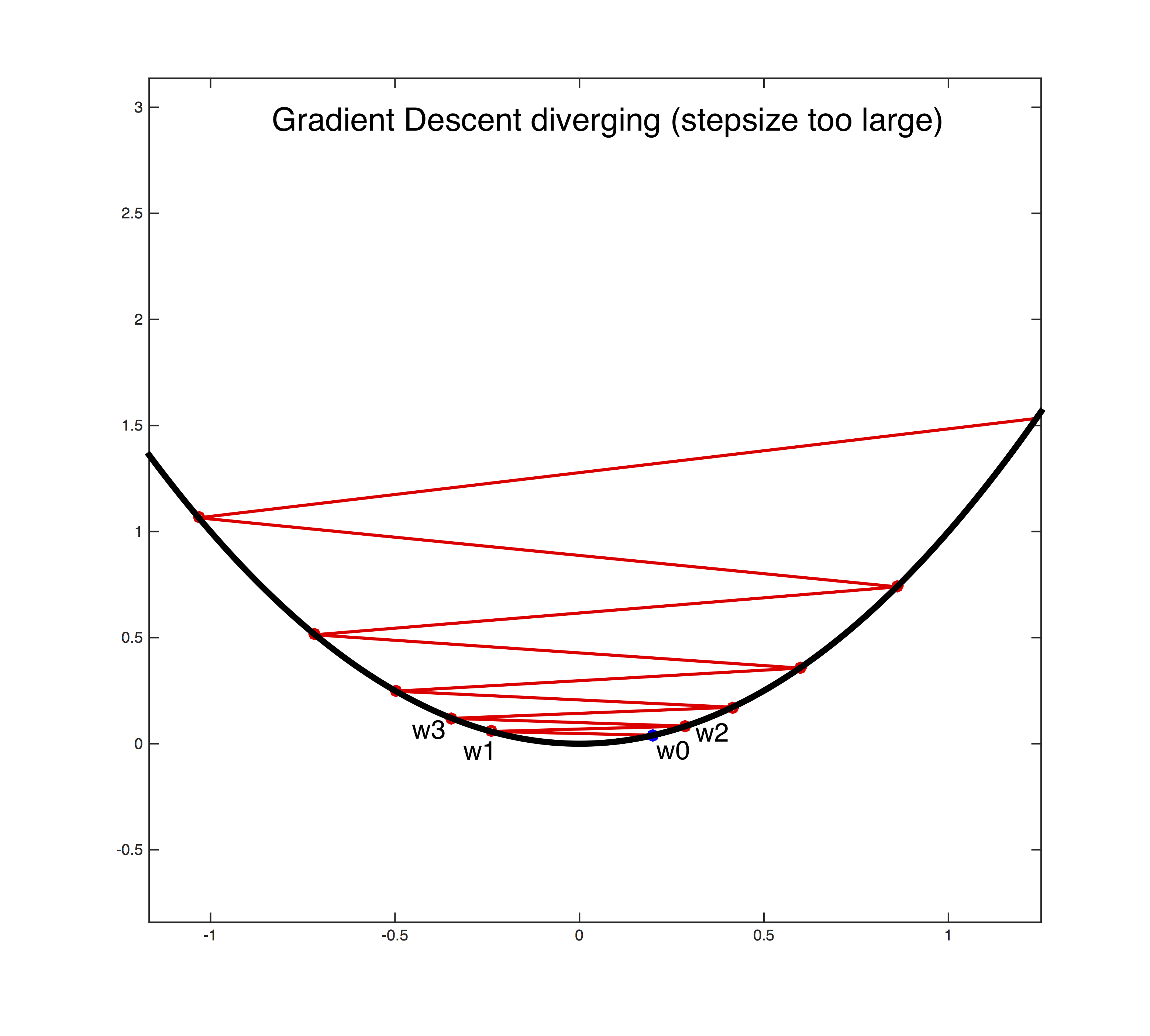

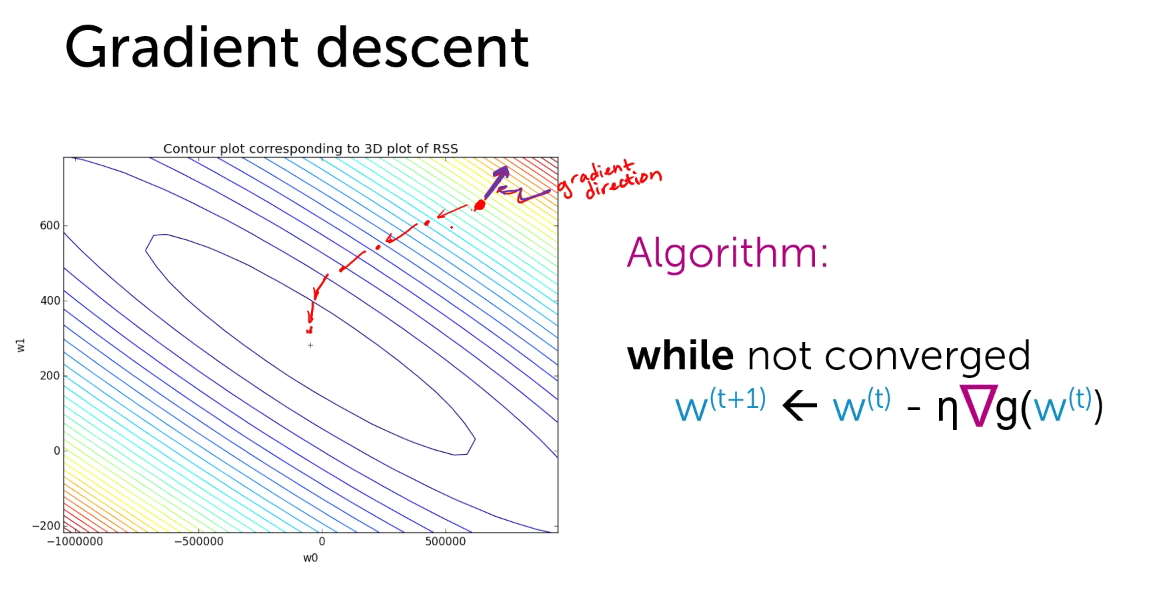

Feature scaling brings the features down to a smaller scale, within a fixed range. Think of a building: you want to keep its shape, but resize it to a smaller scale.

Feature scaling is commonly used in the following scenarios:

- If an algorithm uses Euclidean distance, feature scaling is required, because Euclidean distance is sensitive to large magnitudes.
- Feature scaling can be used to normalise data that has a wide range of values.
- Feature scaling can also speed up an algorithm; gradient descent, for instance, usually converges faster when the features share a similar scale.

While there are many different feature scaling methods available, we will build our custom implementation of a MinMaxScaler using the standard min-max formula, \(x' = \frac{x - x_{\min}}{x_{\max} - x_{\min}}\), which maps each feature to the range [0, 1].

In stochastic gradient descent, instead of calculating the partial derivative over the whole training set, the partial derivative is calculated on just one random sample (stochastic meaning random). This is great because each update needs calculations on only one training example instead of the whole training set, which makes it much faster and well suited to large datasets. However, due to its stochastic nature, stochastic gradient descent does not follow a smooth curve like batch gradient descent, and while it may return good parameters, it is not guaranteed to reach the global minimum.

One approach to the problem of stochastic gradient descent not being able to settle at a minimum is to use something known as a learning schedule. Essentially, this gradually reduces the learning rate:

- The learning rate is initially large (this helps in escaping local minima) and gradually decreases as the algorithm approaches the global minimum.
- If the learning rate is reduced too quickly, the algorithm may get stuck at a local minimum, or it might freeze halfway to the minimum.
- If the learning rate is reduced too slowly, you may jump around the minimum for a long time and still not get optimal parameters.
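Stochastic gradient descent with a learning schedule can be sketched roughly as follows. This is a minimal illustration, not the article's own implementation: the function names, the schedule `t0 / (t + t1)` with its default values, the epoch count and the synthetic data are all assumptions chosen for the example.

```python
import numpy as np


def learning_schedule(t, t0=5.0, t1=50.0):
    """Assumed schedule: starts at t0 / t1 and shrinks as the step count t grows."""
    return t0 / (t + t1)


def stochastic_gradient_descent(X, y, n_epochs=50, seed=0):
    """Fit a linear model by updating on one random training example per step."""
    rng = np.random.default_rng(seed)
    m, n = X.shape
    thetas = np.zeros((1, n))                      # row vector, as in np.dot(X, thetas.T)
    for epoch in range(n_epochs):
        for i in range(m):
            idx = rng.integers(m)                  # pick one random sample
            xi = X[idx:idx + 1]                    # shape (1, n)
            yi = y[idx:idx + 1]                    # shape (1, 1)
            # Gradient of the squared error on this single sample.
            gradient = 2.0 * (xi @ thetas.T - yi) * xi
            eta = learning_schedule(epoch * m + i)  # learning rate decays every step
            thetas -= eta * gradient
    return thetas


# Synthetic data (an assumption): a bias column plus two features in [-1, 1].
rng = np.random.default_rng(42)
m = 200
features = rng.uniform(-1.0, 1.0, size=(m, 2))
X = np.hstack([np.ones((m, 1)), features])
true_thetas = np.array([[4.0, 3.0, -2.0]])
y = X @ true_thetas.T + rng.normal(0.0, 0.1, size=(m, 1))

sgd_thetas = stochastic_gradient_descent(X, y)
print(sgd_thetas)
```

Because the learning rate starts at 0.1 and decays towards zero, the early steps move quickly and the later steps settle down instead of bouncing around the minimum. The fitted parameters should land close to `true_thetas`, though the exact values depend on the random samples drawn.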
    mean_squared_error(np.dot(X, thetas.T), y)
    OUT: 592.14691169960474

    cost_function(X, y, thetas)
    OUT: 592.14691169960474

Fantastic, our cost function works!

Feature Scaling

Feature scaling is a preprocessing technique that is important for models such as Linear Regression, KNN and SVM.
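As a concrete illustration of feature scaling, here is a minimal sketch of min-max scaling, one common method. The function name `min_max_scale` and its `feature_range` parameter are hypothetical and are not taken from the article's implementation.

```python
import numpy as np


def min_max_scale(X, feature_range=(0.0, 1.0)):
    """Rescale each column of X linearly so that it spans feature_range."""
    X = np.asarray(X, dtype=float)
    col_min = X.min(axis=0)
    col_max = X.max(axis=0)
    span = col_max - col_min
    span[span == 0.0] = 1.0          # constant columns: avoid division by zero
    low, high = feature_range
    return low + (X - col_min) / span * (high - low)


# Two features on very different scales.
X_raw = np.array([[1.0, 200.0],
                  [2.0, 400.0],
                  [3.0, 1000.0]])
X_scaled = min_max_scale(X_raw)
print(X_scaled)
```

After scaling, the first column becomes 0, 0.5, 1 and the second becomes 0, 0.25, 1, so both features now lie in the same range while keeping their relative spacing.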

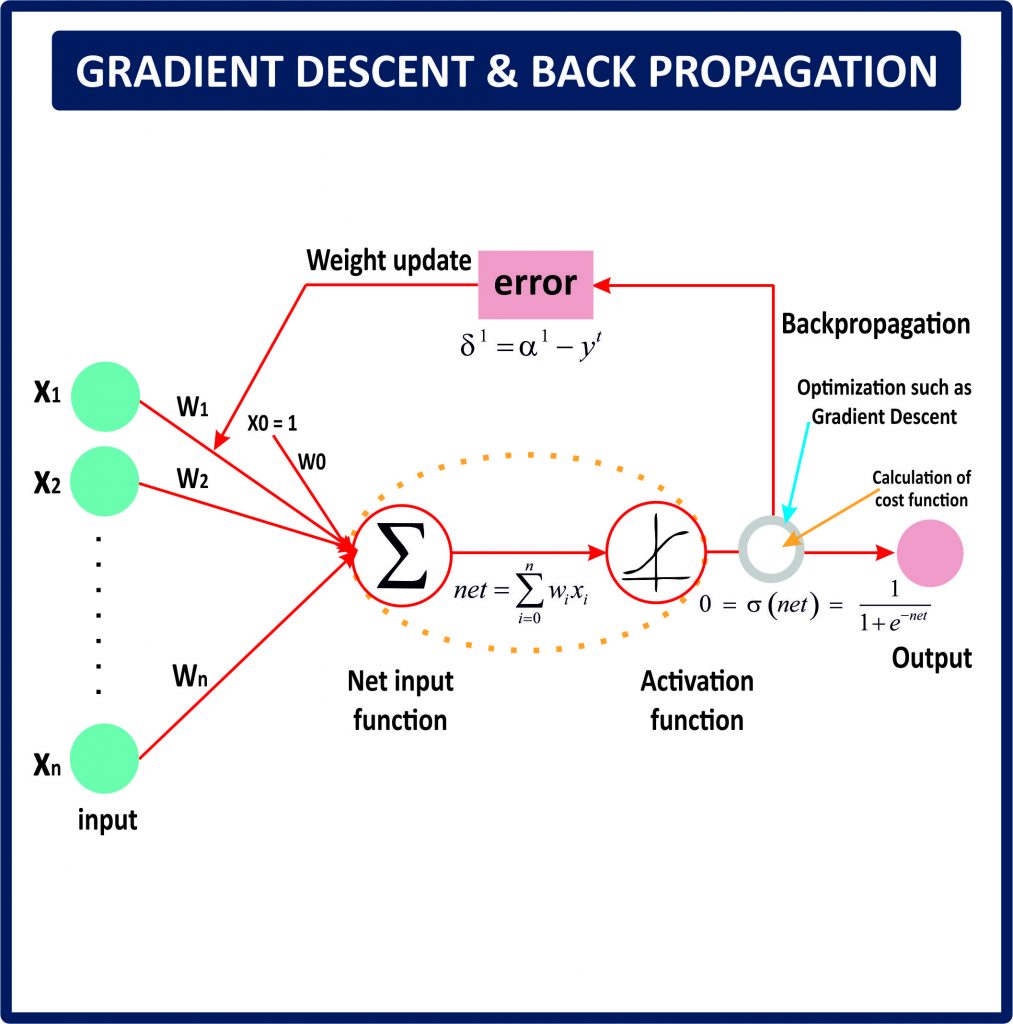

Gradient Descent is fundamental to Data Science, be it deep learning or machine learning. A solid understanding of its principles will certainly help you in your future work. Instead of playing around with hyperparameters and hoping for the best result, you will actually understand what these hyperparameters do, what is happening in the background, and how you can tackle issues you may face when using this algorithm.

However, Gradient Descent is not limited to one algorithm. Two other popular “flavours” of gradient descent (stochastic and mini-batch gradient descent) build on the main algorithm, and you will probably see them more often than plain batch gradient descent. We must therefore gain a solid understanding of these algorithms too, as they have a few additional hyperparameters that we will need to understand and analyse when our algorithm is not performing as well as we expect it to.

While theory is vital for a solid understanding of the algorithm at hand, the actual coding of Gradient Descent and its different “flavours” may prove a difficult yet satisfying task. To achieve this task, the format of the article will look like the following:

- A brief overview of what each algorithm does.
- Further explanations of unclear parts of the code.

We will be using the famous Boston Housing Dataset, which has long shipped with scikit-learn (note that `load_boston` was removed in scikit-learn 1.2, so recent versions need the data from another source). We will also be constructing a linear model from scratch, so hang tight, because you are about to enter a whole new world! We will use the gradient descent algorithm to estimate the model's parameters so as to minimise the loss function.

Great, now let’s test our cost function to see if it really works. To do this, we will use scikit-learn’s mean_squared_error, get the result, and compare it with the output of our own implementation.
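To make the linear model from scratch concrete, here is a minimal sketch of a cost function and plain batch gradient descent. The article's own `cost_function` is not shown in this section, so the definition below is an assumption: mean squared error, with `thetas` stored as a row vector to match the call `np.dot(X, thetas.T)`. The data is synthetic, and the learning rate and iteration count are illustrative choices.

```python
import numpy as np


def cost_function(X, y, thetas):
    """Assumed definition: mean squared error of the predictions X @ thetas.T."""
    errors = X @ thetas.T - y                     # shape (m, 1)
    return (errors.T @ errors).item() / X.shape[0]


def batch_gradient_descent(X, y, thetas, learning_rate=0.1, n_iterations=1000):
    """Batch gradient descent: every step uses the whole training set."""
    m = X.shape[0]
    thetas = thetas.copy()                        # leave the caller's array untouched
    for _ in range(n_iterations):
        errors = X @ thetas.T - y                 # shape (m, 1)
        gradient = (2.0 / m) * (errors.T @ X)     # shape (1, n), gradient of the MSE
        thetas -= learning_rate * gradient
    return thetas


# Synthetic data (an assumption): a bias column plus two features in [-1, 1].
rng = np.random.default_rng(0)
m = 200
features = rng.uniform(-1.0, 1.0, size=(m, 2))
X = np.hstack([np.ones((m, 1)), features])
true_thetas = np.array([[4.0, 3.0, -2.0]])
y = X @ true_thetas.T + rng.normal(0.0, 0.1, size=(m, 1))

initial_thetas = np.zeros((1, 3))
initial_cost = cost_function(X, y, initial_thetas)

# Independent check of the cost function using a different formula.
reference_cost = float(np.mean((X @ initial_thetas.T - y) ** 2))

fitted_thetas = batch_gradient_descent(X, y, initial_thetas)
final_cost = cost_function(X, y, fitted_thetas)
print(initial_cost, reference_cost, final_cost)
```

With scikit-learn installed, `mean_squared_error(y, X @ initial_thetas.T)` from `sklearn.metrics` should agree with `initial_cost` in the same way the reference value does. The cost should drop sharply once gradient descent has run, and the fitted parameters should match the ordinary least-squares solution closely.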
Here \(y\) is the variable to predict (also called the target variable or response variable), \(m(\cdot)\) is an unknown model, and \(\hat{\beta}_0\) and \(\hat{\beta}_1\) are the estimates of \(\beta_0\) and \(\beta_1\), respectively.
